
BetaPrusaV2 3D printer kit: a RepRap Prusa i2+, Part 1

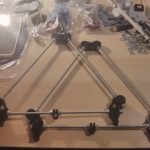

I am currently assembling a RepRap 3D printer.

- Prusa – first triangle

- Prusa – and a second one

- Prusa – y axis connectors

- Prusa – y + z axis connectors

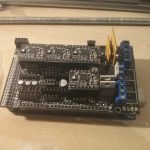

- Prusa – Arduino Mega with stepper motor driver shield

- Prusa – board for the power supply

- Prusa – screws, nuts and washers

- Prusa – 5 stepper motors

- Prusa – the plastic parts are themselves 3D printed

- Prusa – Kapton tape

- Prusa – extruder unit with some heating marks

- Prusa – z axis motor fix

- Prusa – x axis motor fix

- Prusa – frame

- Prusa – y axis linear bearings

How to flash an image to a Raspberry Pi or Banana Pi using dd and a progress bar

Most tools don’t show reliable progress information when flashing an operating system image to an SD card. If you use dd to copy, this can be solved with the handy pv tool:

```
pv -tpreb /path/to/image.img | dd of=/dev/yourUSBorSDSlotTarget bs=1M
```

This shows elapsed time, a progress bar, transfer rate, ETA and bytes copied so far, which makes the wait much more bearable when flashing Pi devices such as the Raspberry Pi or Banana Pi.
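To make clear what the pv | dd pipeline is doing, here is a minimal Python sketch of the same idea: read the image in 1 MiB blocks (like `bs=1M`), write each block to the target, and print bytes, percentage, rate and ETA after every block (like `pv -tpreb`). The function name `flash_image` is hypothetical; it is an illustration, not a replacement for dd, and writing to a real device still needs root and the correct device path.

```python
import os
import sys
import time

BLOCK_SIZE = 1024 * 1024  # 1 MiB, the same block size as dd bs=1M


def flash_image(image_path, target_path, block_size=BLOCK_SIZE, out=sys.stderr):
    """Copy image_path to target_path block by block, printing pv-style progress."""
    total = os.path.getsize(image_path)
    written = 0
    start = time.monotonic()
    with open(image_path, "rb") as src, open(target_path, "wb") as dst:
        while True:
            chunk = src.read(block_size)
            if not chunk:
                break  # end of the image file
            dst.write(chunk)
            written += len(chunk)
            elapsed = max(time.monotonic() - start, 1e-9)  # avoid division by zero
            rate = written / elapsed
            percent = 100.0 * written / total if total else 100.0
            eta = (total - written) / rate if rate else 0.0
            out.write(f"\r{written} bytes {percent:5.1f}% {rate / 1e6:.1f} MB/s ETA {eta:.0f}s")
        dst.flush()
        os.fsync(dst.fileno())  # make sure the data reaches the card before returning
    out.write("\n")
    return written
```

Usage would look like `flash_image("/path/to/image.img", "/dev/yourUSBorSDSlotTarget")`. The explicit `fsync` mirrors the reason to run `sync` after dd: the kernel may still be buffering data when the copy loop ends.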

ROS presentation recommendation

There is a nice talk about ROS by Andreas Bihlmaier, recently presented at the 31st Chaos Communication Congress. It introduces the most important parts of ROS to beginners and explains why the steep learning curve is worth the effort.

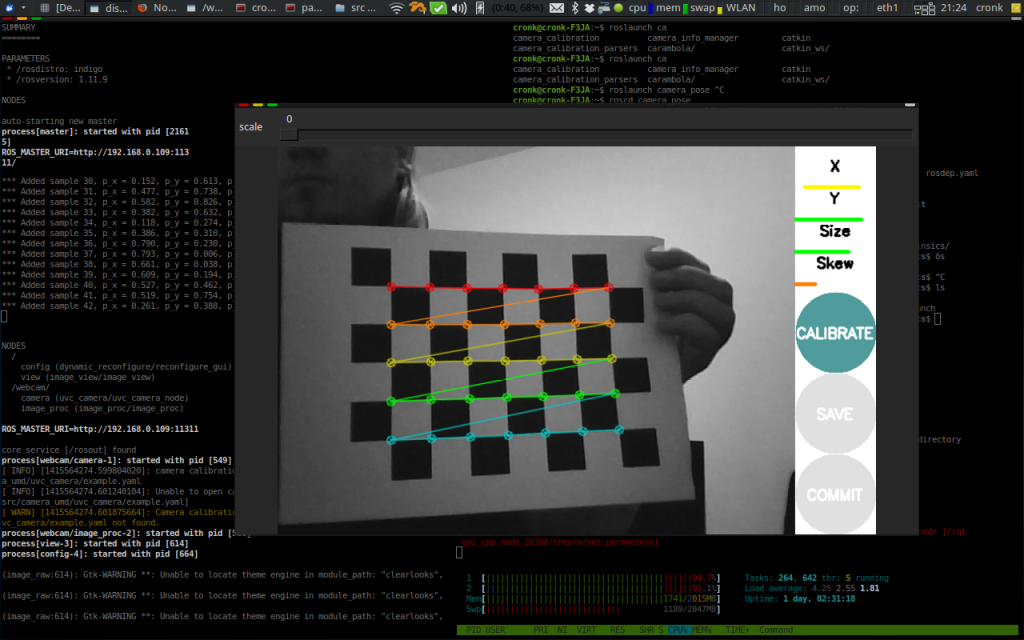

Status Update: Calibrating for Depth

Currently I am experimenting with stereo vision using USB webcams, where good calibration is an essential step. It felt a bit strange to see the image feature detection working on live data.

I’ll try to turn the experience I gained into a small step-by-step guide and probably a GitHub repository soon. Currently I am aiming at getting depth data from webcams only, especially to compare the results with previous setups and to see whether visual odometry can be an option in low-cost environments.
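Why calibration matters so much for depth: once a stereo pair is calibrated and rectified, depth follows from triangulation as Z = f·B/d, where f is the focal length in pixels, B the baseline between the cameras and d the disparity (horizontal pixel shift of a feature). A minimal sketch, assuming rectified images; the function names and the example numbers are illustrative, not values from my webcams.

```python
def disparity_to_depth(disparity_px, focal_length_px, baseline_m):
    """Depth Z = f * B / d for a rectified stereo pair, in metres."""
    if disparity_px <= 0:
        return float("inf")  # no measurable shift: point at (or beyond) infinity
    return focal_length_px * baseline_m / disparity_px


def depth_resolution(depth_m, focal_length_px, baseline_m, disparity_step_px=1.0):
    """Approximate depth change caused by a disparity error of disparity_step_px.

    Differentiating Z = f * B / d gives |dZ| = Z**2 / (f * B) * |dd|,
    so the depth error grows with the square of the distance.
    """
    return depth_m ** 2 * disparity_step_px / (focal_length_px * baseline_m)
```

With f = 700 px and a 6 cm baseline, a disparity of 21 px corresponds to about 2 m, and a single pixel of disparity error at that distance already shifts the depth by roughly 9.5 cm. Errors in f or B from a poor calibration scale every depth value directly.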

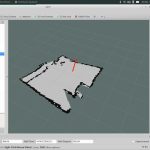

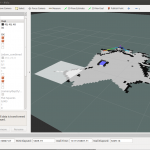

Low-cost hector_mapping with Xtion, 9DRazor IMU and no hardware odometry

This weekend I had the chance to do indoor SLAM by simply walking through my flat with an Asus Xtion (150 EUR), a 9DRazor IMU (plus a 3.3 V FTDI adapter and cable, around 100 EUR) and a common laptop.

By setting up ROS Indigo and using existing software, I can now create a simple 2D map of my flat. Thanks to the software from the PhD program Heterogeneous Cooperating Teams of Robots (Hector) at TU Darmstadt, which I slightly modified to fit the low-cost setup, the results are quite impressive.

- Starting in a square room led to nearly correct results

- leaving the room and entering the hall/corridor

- quickly mapping two more rooms

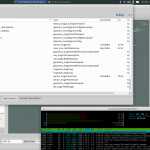
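hector_mapping consumes a 2D laser scan, not a depth image. I don't describe above exactly which package turns the Xtion's depth image into a scan; a common approach (used, for example, by the ROS depthimage_to_laserscan package) takes a horizontal row of the depth image and converts every pixel column into a bearing and a range with the pinhole camera model. A minimal sketch of that conversion for one row; `fx` and `cx` would come from the camera calibration, and the function name is hypothetical.

```python
import math


def depth_row_to_scan(depth_row_m, fx, cx):
    """Convert one row of a depth image into (angle, range) pairs.

    depth_row_m: depth along the optical axis for each pixel column, in metres
                 (0 or NaN marks an invalid reading)
    fx, cx: horizontal focal length and principal point, in pixels
    Angles follow the ROS convention: 0 straight ahead, positive to the left.
    """
    scan = []
    for u, z in enumerate(depth_row_m):
        if not z or math.isnan(z):
            continue  # skip invalid pixels instead of reporting a bogus range
        x = (u - cx) * z / fx      # lateral offset; the camera x axis points right
        angle = math.atan2(-x, z)  # negate so that left of the camera is positive
        scan.append((angle, math.hypot(x, z)))
    scan.sort()  # scans are ordered by increasing angle (right to left)
    return scan
```

The angles only span the camera's horizontal field of view, which is why a single Xtion gives a narrow "scan" compared to a 360 degree laser scanner.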

The Xtion cannot deliver a 360 degree view, which is why I needed to walk slowly. By switching from a weak ARM board to a powerful Intel i5, data rates and data sizes were far better than what the aMoSeRo was capable of:

- my test setup

- FTDI 3.3V Controller

- performance of data aggregation is no longer an issue o/

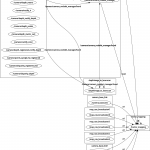

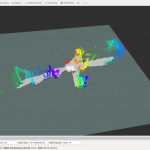

For the ROS-interested folks, here are some ROS-related graphs:

- ROS TF Tree of the setup

- ROS Node Graph of the setup

- ROS Topics Graph of the setup

It has been a weekend project, so the source and some semantic details are not beautiful, but they work. For example, the TF tree statically imitates the setup suggested on the Hector wiki.
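With a static TF tree, the mounting offsets are fixed numbers, and the main moving link is the pose that hector_mapping estimates by scan matching (there is no wheel odometry). Finding out where the sensor is in the map then amounts to chaining that pose with the fixed mounting offset. The sketch below shows this composition in 2D; TF itself works in 3D with quaternions, and the names `compose` and `BASE_TO_LASER` as well as the 10 cm offset are hypothetical.

```python
import math


def compose(a, b):
    """Chain two 2D transforms (x, y, theta): the result applies b inside frame a.

    This is what a TF lookup does along a chain like map -> base_link -> laser.
    """
    ax, ay, at = a
    bx, by, bt = b
    return (
        ax + math.cos(at) * bx - math.sin(at) * by,
        ay + math.sin(at) * bx + math.cos(at) * by,
        (at + bt + math.pi) % (2 * math.pi) - math.pi,  # wrap angle to [-pi, pi)
    )


# Hypothetical static mounting: sensor 0.10 m in front of base_link, no rotation
BASE_TO_LASER = (0.10, 0.0, 0.0)
```

For example, `compose(pose_from_slam, BASE_TO_LASER)` gives the sensor pose in the map; only `pose_from_slam` changes over time, which is why the rest of the tree can stay static.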

Maybe we could profit from using two Xtions and merging their /scan topics. That would give a 300 EUR replacement for a 2D laser scanner costing at least 1000 EUR, and we would be able to build 3D point clouds of everything later.
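Merging two scans could work roughly like this: convert every reading of each sensor into a point in the robot frame using that sensor's mounting pose, then re-bin all points by bearing around the robot and keep the closest range per bin. This is a minimal sketch of one simple approach, not something I have built; the mounting poses (for example two Xtions back to back) and the function name are assumptions.

```python
import math


def merge_scans(scans, num_bins=360):
    """Merge several 2D scans into one scan around base_link.

    scans: list of (mount, readings) where mount = (x, y, yaw) of the sensor in
           base_link and readings = [(angle, range), ...] in the sensor frame
    Returns num_bins ranges covering [-pi, pi); bins without data are inf.
    """
    merged = [math.inf] * num_bins
    bin_width = 2 * math.pi / num_bins
    for (mx, my, myaw), readings in scans:
        for angle, rng in readings:
            # point in the sensor frame, then rotated and shifted into base_link
            sx, sy = rng * math.cos(angle), rng * math.sin(angle)
            bx = mx + math.cos(myaw) * sx - math.sin(myaw) * sy
            by = my + math.sin(myaw) * sx + math.cos(myaw) * sy
            # re-express as bearing and range seen from the base_link origin
            bearing = math.atan2(by, bx)
            index = int((bearing + math.pi) / bin_width) % num_bins
            merged[index] = min(merged[index], math.hypot(bx, by))  # keep the closest obstacle
    return merged
```

Keeping the minimum range per bin is the conservative choice for mapping: if both sensors see the same direction, the nearer obstacle is the one that actually blocks the view.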

Thesis passed.

I finished my thesis and passed. As a consequence, I will soon publish my work on this website. Unfortunately, German copyright law forbids using pictures taken by others without appropriate licenses or paying for them. I am currently thinking of replacing certain pictures with slightly worse but free versions before uploading. I might also divide the 50 pages into semantic parts and publish them as a static part of the website.

Colloquium

I am going to demonstrate the complete thesis and the aMoSeRo on:

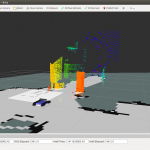

aMoSeRo – 3D Cheese!

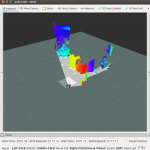

I have implemented a 3D photo function that is not very computationally intensive. It is triggered by pressing a key whenever a snapshot is wanted. This makes it possible to add a large point cloud around the map, as you can see here:

- 3D Mapping: First steps

- 3D Mapping: crossing a door

- 3D Mapping: colors are still quite random, but changes to that are planned
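One cheap way to build such a snapshot-based cloud is to transform the point cloud only once, at the moment the key is pressed, using the current 2D SLAM pose, and append the result to a growing cloud in the map frame. Nothing is re-registered afterwards, which keeps the cost low. A minimal sketch under the assumption of a planar robot (only yaw is applied); the function names are hypothetical and this is not necessarily how the aMoSeRo code does it.

```python
import math


def snapshot_to_map(points, pose):
    """Transform a point cloud taken at a given robot pose into the map frame.

    points: [(x, y, z), ...] in the robot frame at the moment the key was pressed
    pose: (x, y, yaw) of the robot in the map frame, e.g. from the 2D SLAM
    A planar robot is assumed, so only yaw is applied; z is kept as measured.
    """
    px, py, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    return [(px + c * x - s * y, py + s * x + c * y, z) for x, y, z in points]


def add_snapshot(map_cloud, points, pose):
    """Append a transformed snapshot to the accumulated cloud around the map."""
    map_cloud.extend(snapshot_to_map(points, pose))
    return map_cloud
```

Because each snapshot depends only on the pose at trigger time, any error in that pose is frozen into the cloud, so it pays to take snapshots while the map is well localized.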

Now the writing needs to be finished and the code needs to be commented and cleaned up; the last two weeks have already begun.

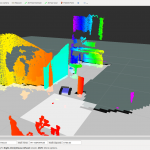

aMoSeRo – mapping the reality

Today is the day of the first accurate aMoSeRo map. I tried several SLAM algorithms, e.g. the hector_mapping package, but the data was too bad. So after reviewing nearly all my code and fixing a lot of unit and publishing-rate issues, today the first map was created that really is a map of the place I live in!

- Mapping: faulty sensor data led to strange rooms

- Mapping: First experiments… …at least the walls are not round anymore…

- Mapping: the journey begins

- Mapping: the whole way, well measured

- Mapping: up to the next rooms

- Mapping: Point cloud data is possible, but only while standing (computation issues)
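A standard way to reduce the computational load of point clouds is voxel-grid downsampling, as done by PCL's VoxelGrid filter: space is divided into cubes of a fixed edge length, and all points falling into one cube are replaced by their centroid. A minimal pure-Python sketch of the idea (not what the aMoSeRo currently uses); the voxel size is a tuning parameter and the function name is hypothetical.

```python
import math


def voxel_downsample(points, voxel_size):
    """Keep one averaged point per cubic voxel of edge length voxel_size (metres)."""
    voxels = {}
    for x, y, z in points:
        key = (math.floor(x / voxel_size), math.floor(y / voxel_size), math.floor(z / voxel_size))
        sx, sy, sz, n = voxels.get(key, (0.0, 0.0, 0.0, 0))
        voxels[key] = (sx + x, sy + y, sz + z, n + 1)  # running sums per voxel
    # the centroid of each occupied voxel replaces all points that fell into it
    return [(sx / n, sy / n, sz / n) for sx, sy, sz, n in voxels.values()]
```

Doubling the voxel size cuts the number of points in dense regions by up to a factor of eight, at the cost of fine detail.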