This surely doesn’t look amazing from the outside – but it has been a day of hard work, and a very important one.

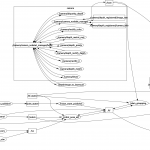

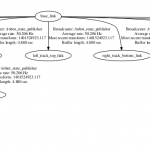

- first EKF – the package graph

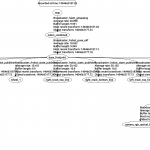

- first EKF – the new TF-Tree

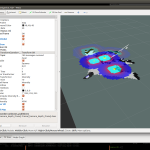

- first EKF – slamming, mapping

The robot_pose_ekf package is working! It is neither the setup I am going to use nor very stable – but it proves a few points.
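The core idea behind combining several imperfect sources can be seen in one dimension. robot_pose_ekf does this with an extended Kalman filter over the full 3D pose. The sketch below is only a plain scalar Kalman measurement update, not the package's code, and the numbers in the usage part are made up for illustration. Each fused measurement shrinks the variance of the estimate, which is why even mediocre extra sources can help.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    """A 1D state estimate: mean value and its variance."""
    mean: float
    var: float


def kalman_update(prior: Estimate, z: float, r: float) -> Estimate:
    """Fuse one scalar measurement z (variance r) into the prior estimate.

    Linear Kalman measurement update with H = 1. robot_pose_ekf applies the
    same idea to a 6D pose with an EKF; this sketch omits the prediction
    step and any nonlinearity.
    """
    k = prior.var / (prior.var + r)           # Kalman gain: how much to trust z
    mean = prior.mean + k * (z - prior.mean)  # move towards the measurement
    var = (1.0 - k) * prior.var               # fused estimate is more certain
    return Estimate(mean, var)


if __name__ == "__main__":
    est = Estimate(mean=0.0, var=1.0)        # e.g. a heading from wheel odometry
    est = kalman_update(est, z=2.0, r=1.0)   # e.g. a heading from the IMU
    est = kalman_update(est, z=1.0, r=0.5)   # e.g. a third, hypothetical source
    print(est)
```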

First I needed to write my own IMU driver for ROS: 9 degrees of freedom (DOF) for 30€ and a bag of problems. That is a lot cheaper than the often-used SparkFun Razor IMU (around 100€), which comes with existing ROS code, and exactly 3 DOF more than the WiiMote with Motion+ (about 60-70€) I have been experimenting with. There is still a lot of work left on stability and on calibrating the LSM9DS0, and I had to learn far more than I ever expected about magnetic fields and strange units that needed converting into other ones. But so far the /imu_data topic publishes data that is not totally wrong, as normalisation minimises the issues with the 3-5 scales per axis I had to deal with.
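The unit juggling can be sketched as follows. The LSM9DS0 reports raw signed 16-bit counts whose meaning depends on the selected full-scale range. There are 5 settings for the accelerometer, 3 for the gyroscope and 4 for the magnetometer, which fits the 3-5 scales per axis mentioned above. Meanwhile, sensor_msgs/Imu expects m/s² and rad/s, and sensor_msgs/MagneticField expects tesla.

The sensitivity values below are recalled from the LSM9DS0 datasheet and should be treated as assumptions to check against your own copy. The function names are hypothetical and not part of any existing driver. The `normalise` helper shows one plausible reading of the normalisation step: for tilt and heading only the direction of the accelerometer and magnetometer vectors matters, so a wrong overall scale factor drops out.

```python
import math

# Sensitivity per full-scale setting. ASSUMED values recalled from the
# LSM9DS0 datasheet; double-check them against your datasheet revision.
ACCEL_MG_PER_LSB = {2: 0.061, 4: 0.122, 6: 0.183, 8: 0.244, 16: 0.732}  # key: ±g
GYRO_MDPS_PER_LSB = {245: 8.75, 500: 17.50, 2000: 70.0}               # key: ±dps
MAG_MGAUSS_PER_LSB = {2: 0.08, 4: 0.16, 8: 0.32, 12: 0.48}            # key: ±gauss

STANDARD_GRAVITY = 9.80665  # m/s^2 per g
GAUSS_TO_TESLA = 1e-4       # 1 gauss = 1e-4 T


def accel_to_si(raw: int, full_scale_g: int) -> float:
    """Raw accelerometer count -> m/s^2 (unit used by sensor_msgs/Imu)."""
    return raw * ACCEL_MG_PER_LSB[full_scale_g] * 1e-3 * STANDARD_GRAVITY


def gyro_to_si(raw: int, full_scale_dps: int) -> float:
    """Raw gyroscope count -> rad/s (unit used by sensor_msgs/Imu)."""
    return math.radians(raw * GYRO_MDPS_PER_LSB[full_scale_dps] * 1e-3)


def mag_to_si(raw: int, full_scale_gauss: int) -> float:
    """Raw magnetometer count -> tesla (unit used by sensor_msgs/MagneticField)."""
    return raw * MAG_MGAUSS_PER_LSB[full_scale_gauss] * 1e-3 * GAUSS_TO_TESLA


def normalise(v):
    """Scale a 3-vector to unit length, discarding its absolute magnitude."""
    n = math.sqrt(sum(c * c for c in v))
    if n == 0.0:
        raise ValueError("cannot normalise a zero vector")
    return tuple(c / n for c in v)
```

For example, a sensor resting flat at the ±2 g setting that reads about 16393 counts on its vertical axis corresponds to roughly 9.81 m/s².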

Next I needed to improve my bad odometry sources – if not in quality, then at least in quantity – so I added a GPS sensor as the /vo topic. The CubieTruck is abstruse when it comes to some simple things, like using one of its 8 possible UART connections… so far I have not managed to get the GPS running over UART, only through an external USB serial controller. Another area that needs heavy improvement…
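Feeding a GPS into robot_pose_ekf means turning latitude/longitude into a metric pose. As far as I know, the vo input is a nav_msgs/Odometry message with positions in metres. How this was done here is not described. The sketch below assumes the module emits standard NMEA GGA sentences over the (USB) serial port. It verifies the NMEA checksum, converts the ddmm.mmmm fields to decimal degrees and projects them onto a local east/north plane with an equirectangular approximation, which is adequate over the short distances a small robot covers. Filling the Odometry message and its covariance is left out so the code does not depend on rospy. All function names are hypothetical.

```python
import math
from functools import reduce

EARTH_RADIUS_M = 6371000.0  # mean Earth radius; fine for small local areas


def nmea_checksum(body: str) -> str:
    """XOR of all characters between '$' and '*', as two uppercase hex digits."""
    return format(reduce(lambda acc, ch: acc ^ ord(ch), body, 0), "02X")


def _to_degrees(value: str, hemisphere: str) -> float:
    """Convert NMEA (d)ddmm.mmmm plus N/S/E/W into signed decimal degrees."""
    raw = float(value)
    degrees = int(raw // 100)
    minutes = raw - degrees * 100
    sign = -1.0 if hemisphere in ("S", "W") else 1.0
    return sign * (degrees + minutes / 60.0)


def parse_gga(sentence: str):
    """Return (lat, lon) in decimal degrees from a GGA sentence, or None without a fix."""
    sentence = sentence.strip()
    if not sentence.startswith("$") or "*" not in sentence:
        raise ValueError("not an NMEA sentence")
    body, checksum = sentence[1:].split("*", 1)
    if nmea_checksum(body) != checksum.upper():
        raise ValueError("checksum mismatch")
    fields = body.split(",")
    if not fields[0].endswith("GGA") or len(fields) < 7:
        raise ValueError("not a GGA sentence")
    if fields[6] == "0" or not fields[2] or not fields[4]:
        return None  # fix quality 0 means no position fix
    return _to_degrees(fields[2], fields[3]), _to_degrees(fields[4], fields[5])


def to_local_xy(lat, lon, origin_lat, origin_lon):
    """Equirectangular projection to metres east (x) and north (y) of an origin."""
    x = math.radians(lon - origin_lon) * math.cos(math.radians(origin_lat)) * EARTH_RADIUS_M
    y = math.radians(lat - origin_lat) * EARTH_RADIUS_M
    return x, y
```

The first valid fix after start-up is a natural choice for the origin, so the robot starts near (0, 0) in its local frame.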

Finally I had to deal with the REP 105 issue I described in a previous post. The gmapping parameters needed adjusting, which came with some serious confusion caused by inconsistent syncing across my 4 working devices (rosBrain running roscore and the SLAM nodes, rosDev as my development machine, the aMoSeRo itself and the Seafile backup server)…

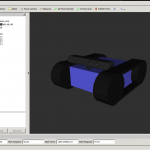

…but after that all parts worked together for the first time, including a simulated aMoSeRo moving on screen while the real one was being held at different angles.
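The REP 105 frame chain behind the new TF tree can be illustrated in 2D. REP 105 specifies map → odom → base_link. The SLAM node (here gmapping) publishes map → odom to correct drift, while the odometry source (here the EKF) publishes odom → base_link, and the robot's pose in the map is the composition of both. The sketch below composes planar transforms with made-up values.

As far as I know, robot_pose_ekf names its frames odom_combined and base_footprint by default. gmapping's odom_frame and base_frame parameters therefore have to match whatever names are actually published.

```python
import math
from typing import NamedTuple


class Pose2D(NamedTuple):
    """A planar transform: translation (x, y) in metres, rotation theta in radians."""
    x: float
    y: float
    theta: float


def compose(a: Pose2D, b: Pose2D) -> Pose2D:
    """Return a ∘ b: transform b (given in a's child frame) expressed in a's parent frame."""
    c, s = math.cos(a.theta), math.sin(a.theta)
    # Wrap the summed angle back into (-pi, pi].
    theta = math.atan2(math.sin(a.theta + b.theta), math.cos(a.theta + b.theta))
    return Pose2D(a.x + c * b.x - s * b.y, a.y + s * b.x + c * b.y, theta)


# REP 105 chain: map -> odom      (published by the SLAM node, e.g. gmapping)
#                odom -> base_link (published by the odometry source, e.g. the EKF)
map_T_odom = Pose2D(1.0, 0.0, math.pi / 2)  # hypothetical drift correction
odom_T_base = Pose2D(1.0, 0.0, 0.0)         # hypothetical EKF pose estimate
map_T_base = compose(map_T_odom, odom_T_base)
print(map_T_base)
```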

Everything is still far from done, but we are on the right track – and we’ve already found out how wide the road is 🙂